Live Experimentation in Account Enforcement Communications

Overview

This analysis uses ‘experimentation’ to describe the observed deployment of materially different enforcement message variants to users over time, independent of any internal designation or intent.

We have observed a recent increase in independent, publicly documented user reports (1, 2, 3, 4) indicating receipt of enforcement emails asserting that the user’s account has been identified as engaging in activity “not permitted” under platform policies.

The volume and temporal clustering of these reports suggest a recurring pattern rather than isolated incidents. This pattern closely resembles a documented wave of similar enforcement notices reported approximately two years earlier (1, 2).

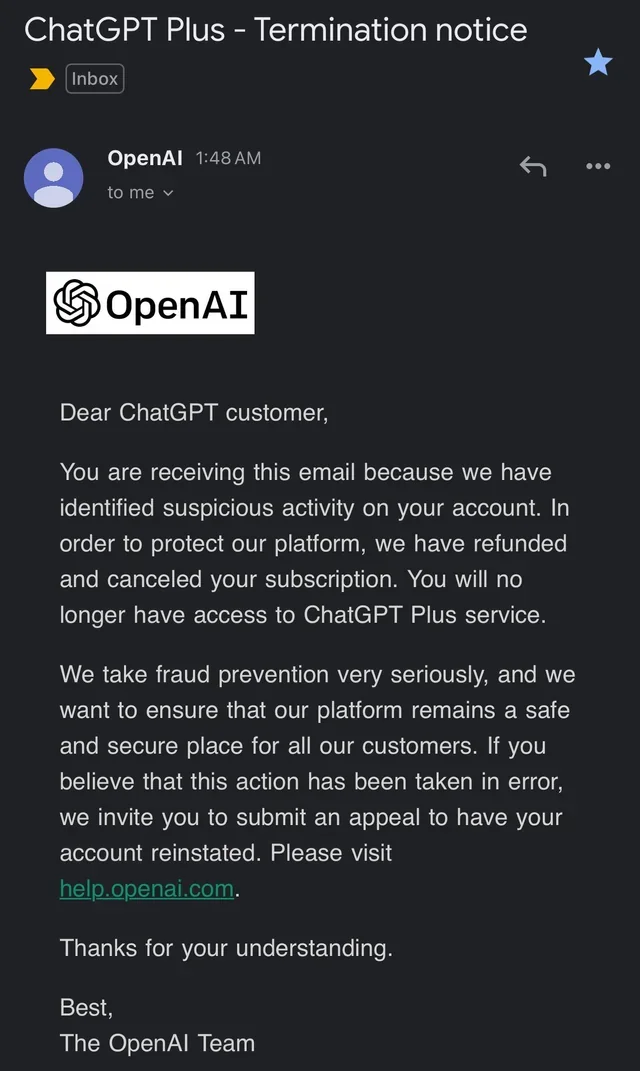

19/10/23 - 2 Years back

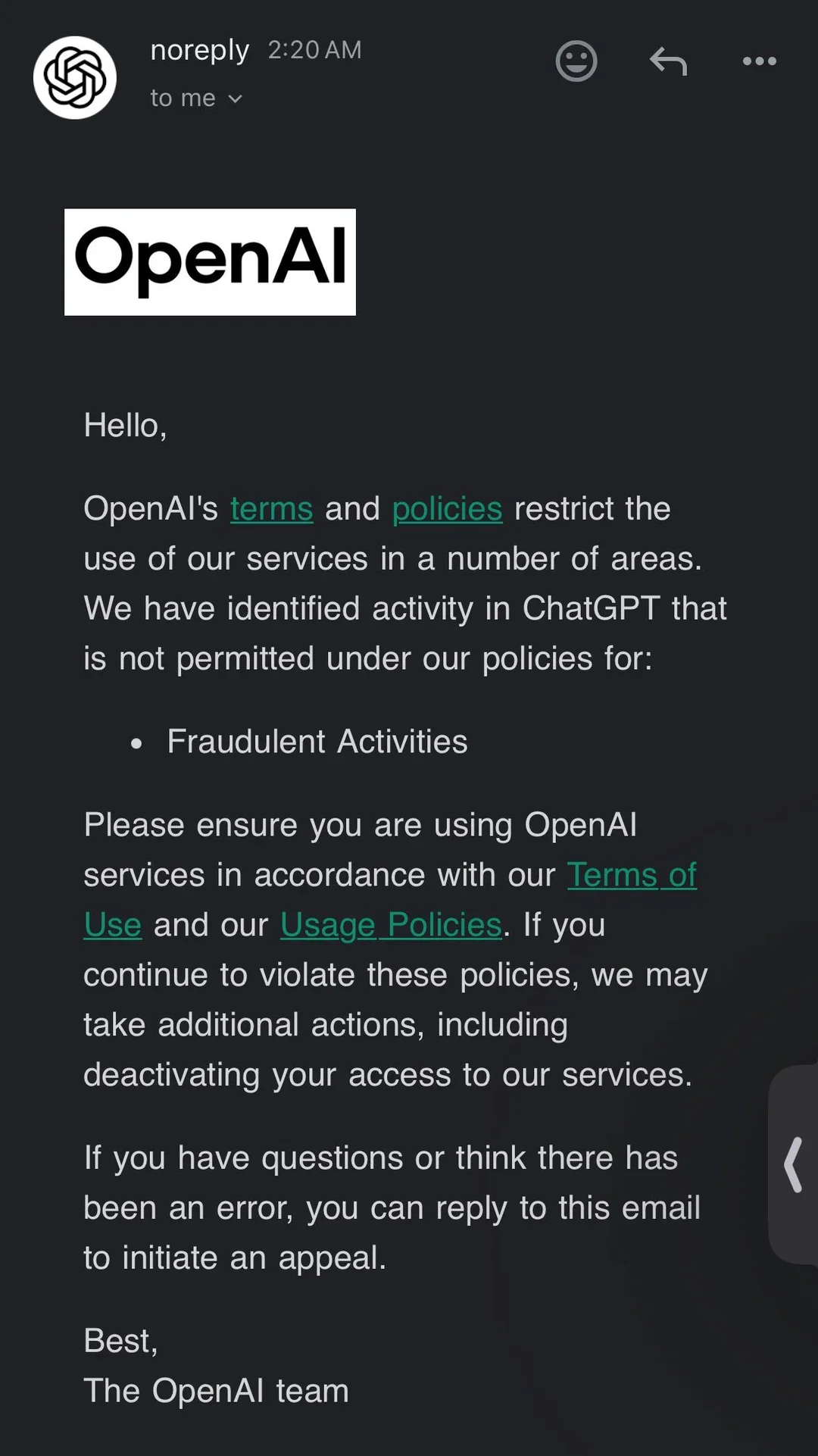

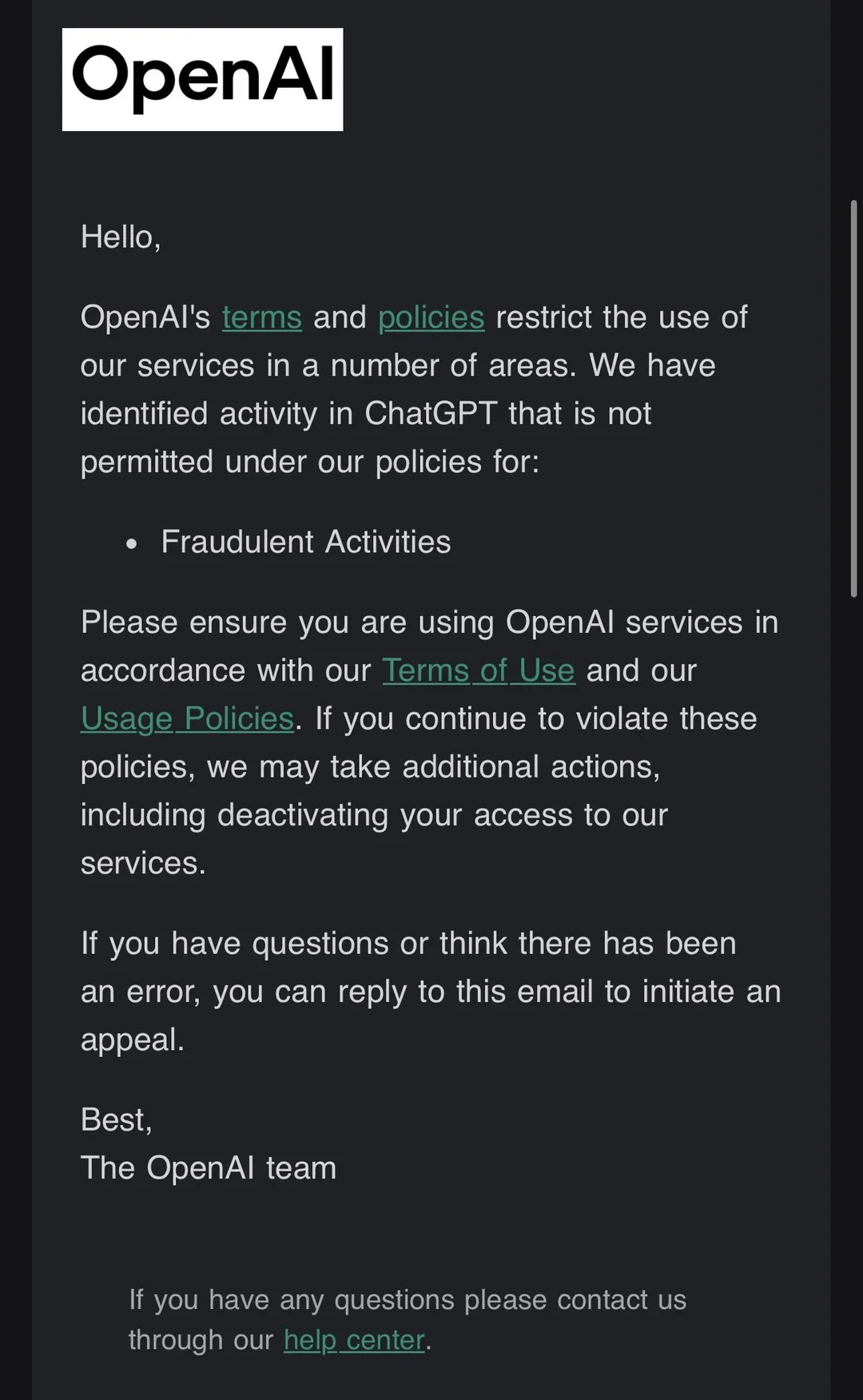

15/11/25 - 2 Months back

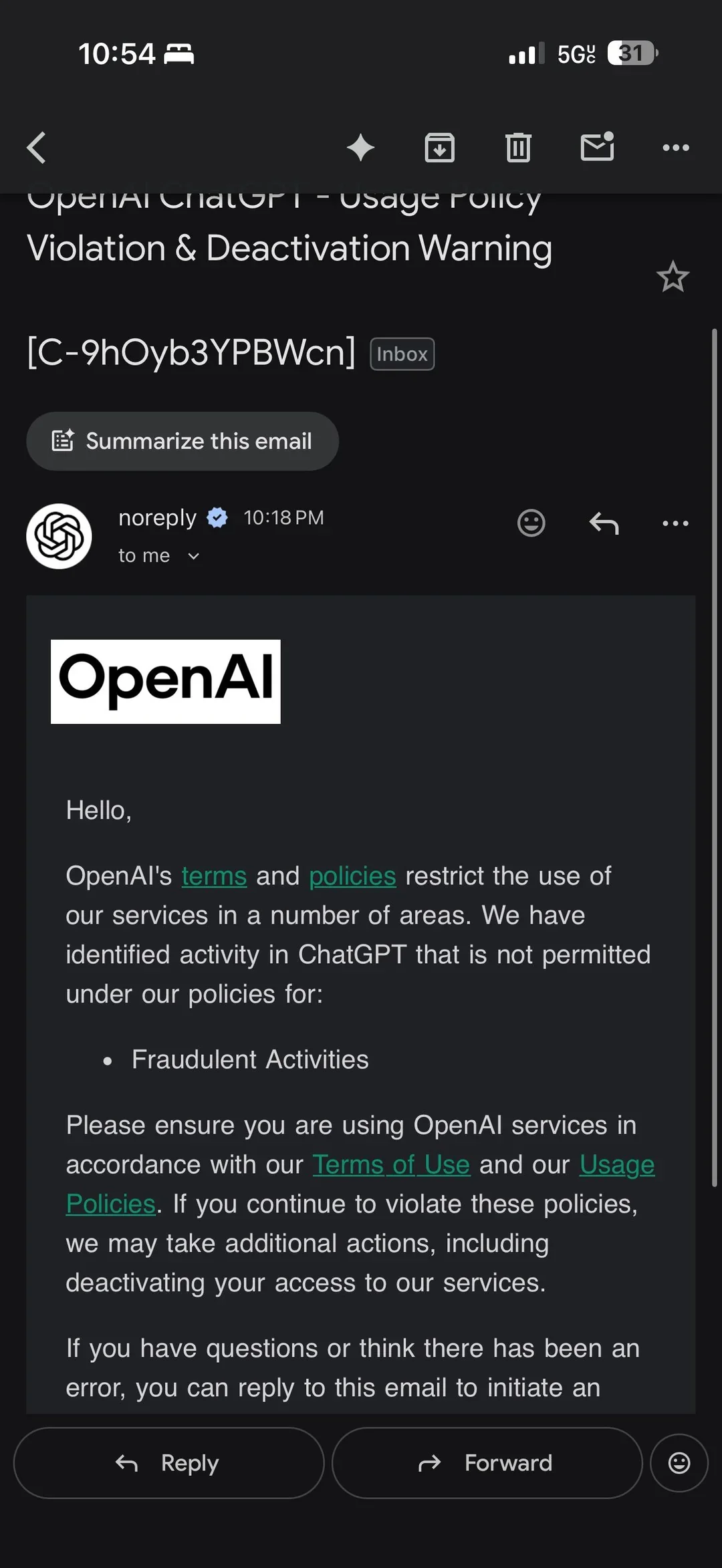

21/01/26 - 6 days ago

As shown in the accompanying screenshots (two years ago, two months ago, and within the past six days), the language used in these notices has materially changed over time. Earlier communications employed more ambiguous or cautionary phrasing, while recent messages assert misconduct more definitively, including explicit references to “fraudulent activities.”

This recurrence, combined with the evolution in tone and specificity, suggests the operation of a systemic process rather than individualised, case-specific enforcement actions.

Hypotheses

| Hypothesis | Description | Preliminary Likelihood | Key Supporting Indicators |
|---|---|---|---|
| H1: Legitimate Enforcement, Broken Process | Valid policy triggers occurred and automated notices were issued, but appeal/support pipelines appear degraded, rate-limited, or non-functional. | MEDIUM | Automated notices at scale; absence of functional appeal path; inconsistent or irrelevant support responses. |
| H2: Experimentation Failure | Live experimentation of enforcement language occurred in cases where no underlying policy violation had taken place; remediation pathways appear absent or not enabled. | MED–HIGH | Multiple message variants over time; lack of case-specific particulars; recurrence across periods; weak/absent contestation mechanism. |
| H3: Vendor or Subsystem Fault | Third-party or internal trust & safety / risk subsystem misfired due to thresholds, scope expansion, or configuration error; org may be unaware of downstream effects. | MEDIUM | Sudden increase in reports; possible regional clustering; support unable to explain triggers; templated responses. |
| H4: Deterrence / Dark-Pattern Deployment | Vague enforcement messaging used to suppress behaviour via fear, uncertainty, and friction rather than case-specific adjudication. | LOW–MED | Definitive “fraud” language without particulars; deterrent framing; historical precedent across platforms (contextual). |

Note: Likelihood assessments reflect currently available evidence only and may change as additional artefacts (headers, timelines, response handling) become available.

Email Analysis

1. Assertion of certainty.

“We have identified activity … not permitted … for: Fraudulent Activities”

“If you continue to violate these policies”

The language used asserts a determination of fact rather than presenting a warning, inquiry, or request for clarification.

2. Absence of particulars.

The notice provides no evidence, timestamps, affected actions, cited policy clauses, or description of the alleged behaviour. This prevents meaningful review or contestation and fails basic due-process norms.

3. Fear-based implications and escalation threats.

“we may take additional actions, including deactivating your access”

The message introduces potential punitive outcomes without supplying the information required to understand or remediate the alleged issue.

4. Non-functional appeal pathway.

Multiple recipients report that replies to the notice receive no response or result in non-substantive, unrelated support replies. In combination with the asserted certainty of wrongdoing, this renders the stated appeal option functionally coercive and ambiguous rather than corrective.

User Patterns

When examining affected users over the past four months, a consistent set of characteristics emerges across independent reports. Collectively, these users exhibit an absence of common indicators typically associated with fraud or abuse enforcement:

1. Users report no VPN usage.

2. Users report attempting to modify behaviour following receipt of the notice.

3. Users report maintaining a single account only.

4. Users report no prior warnings or moderation notices.

5. Users report no history of billing disputes or payment irregularities.

6. Users consistently state they have not engaged in malware-related, NSFW, or illegal discussions.

While self-reported, the consistency of these characteristics across multiple independent accounts materially weakens explanations based on individual misuse or high-risk behaviour.

What this is not

This analysis does not assert criminal liability, intent, or bad faith by any party.

It does not claim that all recipients of these notices are innocent, nor does it deny the legitimacy of fraud prevention or automated enforcement systems in general.

It does not rely on a single user account or anecdotal report, but instead examines recurring characteristics across multiple independent reports and message variants.

Finally, this analysis does not purport to substitute for regulatory determination or legal judgment. It documents observed behaviour, identifies procedural risk, and maps those observations to established regulatory expectations.

Regulator perspective

From a regulatory standpoint, the primary issue raised by these notices is not whether fraud prevention systems should exist, but whether their operation and downstream handling meet established standards of fairness, transparency, and contestability.

Regulators typically assess such situations by first determining whether enforcement communications represent automated or semi-automated decision-making with meaningful impact on users. Where messages assert wrongdoing and threaten account restriction or termination, this threshold may be met regardless of whether enforcement action is ultimately taken.

Once that threshold is crossed, attention shifts to procedural safeguards. This includes whether users are provided with sufficient information to understand the basis of the decision, whether they can meaningfully contest or seek review of the determination, and whether those review pathways function in practice rather than merely existing in name.

Regulators are also likely to examine the accuracy and governance of any fraud-related classifications applied to user accounts. Labels implying misconduct constitute personal data, and inaccuracies or unchallengeable classifications present heightened risk under data protection and consumer protection frameworks.

Finally, regulators consider organisational accountability. Where users receive definitive enforcement messaging but support, privacy, and trust-and-safety pathways fail to converge on a coherent response, this indicates a breakdown in internal controls rather than isolated user error.

The following table maps observed behaviours to relevant regulatory expectations across jurisdictions. It does not assert legal conclusions, but highlights areas where procedural risk is elevated and where regulatory scrutiny would reasonably be expected.

Breach/Compliance Findings

| Claim / Notice | Expected Standard | Observed Behaviour | Status | Risk Tier | Breached Law / Article |
|---|---|---|---|---|---|
| “We have identified activity… not permitted… for: Fraudulent Activities.” | Fair, transparent communication; no assertion of wrongdoing without particulars. | Fraud asserted as fact; no details, timestamps, or evidentiary basis provided. | Failed | CRITICAL | EU: GDPR Art. 5(1)(a) (fairness & transparency); Art. 5(1)(d) (accuracy)<br>UK: UK GDPR Art. 5(1)(a); Art. 5(1)(d)<br>US: FTC Act §5 (deceptive practices)<br>AU: Privacy Act 1988 (Cth) APP 5 (notice), APP 10 (quality); ACL s18 |
| “Reply to this email if you believe this is an error.” | Effective appeal mechanism; acknowledgement; meaningful review. | Replies unanswered or misrouted; no substantive review or response. | Failed | HIGH | EU: GDPR Art. 12 (transparent handling and facilitation of rights)<br>UK: UK GDPR Art. 12<br>US: FTC Act §5 (unfair practices, procedural opacity)<br>AU: Privacy Act 1988 APP 12 (access); APP 13 (correction pathway relevance) |
| Fraud classification applied to account | Personal data must be accurate and contestable. | Users cannot access, verify, or correct fraud-related labels. | Contradicted | CRITICAL | EU: GDPR Art. 5(1)(d) (accuracy); Art. 16 (rectification)<br>UK: UK GDPR Art. 5(1)(d); Art. 16<br>US: State privacy laws (e.g., CPRA right to correct / access where applicable)<br>AU: Privacy Act 1988 APP 10 (quality); APP 13 (correction) |
| Subject Access Request (SAR) | Provide personal data or cite a lawful exemption within statutory time. | SARs reportedly treated as generic export requests; no formal SAR response issued. | Failed | CRITICAL | EU: GDPR Art. 15 (access); Art. 12(3) (timelines)<br>UK: UK GDPR Art. 15; Art. 12(3)<br>US: State privacy laws (e.g., CPRA access rights) where applicable<br>AU: Privacy Act 1988 APP 12 (access) |
| Automated fraud determination | If significant effect: disclose automation, logic (high-level), and allow contestation. | Automation implied; no disclosure, explanation, or human review path evidenced. | Unclear | HIGH | EU: GDPR Art. 22 (automated decision-making & profiling)<br>UK: UK GDPR Art. 22<br>US: FTC Act §5 (algorithmic unfairness / lack of contestability)<br>AU: Privacy Act 1988 APP 1 (governance); APP 6 (use/disclosure context) |
| Fear-based / coercive enforcement messaging | Proportionate, non-deceptive communication; avoid asserting wrongdoing without support. | Fraud asserted as fact; no supporting detail; chilling effect risk. | Failed | HIGH | EU: GDPR Art. 5(1)(a); UCPD 2005/29/EC (contextual)<br>UK: UK GDPR Art. 5(1)(a); CPUTR 2008 (contextual)<br>US: FTC Act §5 (dark patterns / coercive messaging)<br>AU: ACL s18; Privacy Act 1988 APP 5 (notice/fairness context) |

Note: Legal references are mapped to procedural obligations and consumer-protection principles; this does not assert criminal liability or intent. “US” references reflect federal baseline plus representative state privacy rights where applicable.

What to do if you receive this email?

If you receive an enforcement notice asserting “fraudulent activities” or similar language, the following steps are recommended to preserve clarity and evidence.

1. Seek classification of the notice

Before disputing the allegation itself, request confirmation of what the notice represents. A concise reply is sufficient:

Does this message represent:

a) an enforcement action,

b) a warning, or

c) an automated or experimental message sent in error?

This step is intended to establish whether a substantive decision has been made and whether a review pathway exists.

2. Preserve the original message

If no response is received, retain the original email in its native format (for example, `.eml`), rather than relying on screenshots alone. This preserves metadata that may be relevant for later review.

3. Review message headers (contextual, not determinative)

Email headers may indicate whether a message was generated and delivered via automated bulk-mail or helpdesk infrastructure. Common indicators include domains associated with transactional or support systems, such as:

sendgrid.net

mailgun.org

amazonses.com

sparkpostmail.com

zendesk.com

helpscout.net

freshdesk.com

intercom-mail.com

The presence of these domains suggests automated delivery or ticketing infrastructure. It does not, on its own, establish whether the underlying decision was automated, reviewed by a human, or erroneous.
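The header check can be partly automated. The following is a minimal sketch, assuming the notice has been saved as an `.eml` file and that the relevant domains appear in the `Received`, `Return-Path`, `DKIM-Signature`, or `Message-ID` headers. The function names, the choice of headers, and the sample message are illustrative assumptions, not a description of how any particular platform sends mail. Matching is by simple substring, so a hit is a prompt for manual inspection rather than proof.

```python
from email import message_from_binary_file, message_from_string
from email.message import Message

# Domains listed above; presence suggests bulk-mail or helpdesk infrastructure.
INFRA_DOMAINS = (
    "sendgrid.net", "mailgun.org", "amazonses.com", "sparkpostmail.com",
    "zendesk.com", "helpscout.net", "freshdesk.com", "intercom-mail.com",
)

# Headers that commonly carry relay or sender domains (an assumption;
# which headers are present varies by provider).
HEADERS_TO_SCAN = ("Received", "Return-Path", "DKIM-Signature", "Message-ID")


def load_eml(path: str) -> Message:
    """Parse a saved .eml file into a Message object."""
    with open(path, "rb") as fh:
        return message_from_binary_file(fh)


def find_infrastructure_domains(msg: Message) -> dict[str, list[str]]:
    """Map each matched domain to the header names in which it appears."""
    hits: dict[str, list[str]] = {}
    for name in HEADERS_TO_SCAN:
        for value in msg.get_all(name, []):
            lowered = str(value).lower()
            for domain in INFRA_DOMAINS:
                # Crude substring match: contextual, not determinative.
                if domain in lowered and name not in hits.setdefault(domain, []):
                    hits[domain].append(name)
    return hits


# Hypothetical sample message, using reserved example addresses only.
SAMPLE = """\
Return-Path: <bounces+123@em.example.sendgrid.net>
Received: from o1.example.sendgrid.net (o1.example.sendgrid.net [192.0.2.10])
 by mx.example.org with ESMTPS; Wed, 21 Jan 2026 10:00:00 +0000
From: Support <support@example.com>
To: user@example.org
Subject: Notice
Message-ID: <abc@example.com>

Body text.
"""

print(find_infrastructure_domains(message_from_string(SAMPLE)))
```

For a real message, `find_infrastructure_domains(load_eml("notice.eml"))` would be the equivalent call, where `notice.eml` is a placeholder filename. As noted above, a match indicates delivery infrastructure only and says nothing about how the underlying decision was reached.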

4. Document outcomes

Note whether a response is received, whether it addresses the substance of the notice, and whether any meaningful review or clarification is provided. Lack of response or non-substantive replies are themselves relevant observations.
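One way to keep these observations consistent over time is a simple structured log. The sketch below is an assumption about what such a record might contain; the `OutcomeRecord` schema and its field names are hypothetical and simply mirror the three questions in this step.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class OutcomeRecord:
    """One observation about how a notice or reply was handled (hypothetical schema)."""
    observed_on: str           # ISO date of the observation, e.g. "2026-01-27"
    response_received: bool    # did any reply arrive?
    addresses_substance: bool  # did the reply engage with the notice itself?
    review_provided: bool      # was any meaningful review or clarification given?
    notes: str = ""            # free-text detail, e.g. what the reply said


def to_json_line(record: OutcomeRecord) -> str:
    """Serialise a record as one JSON line, suitable for appending to a log file."""
    return json.dumps(asdict(record), sort_keys=True)


record = OutcomeRecord(
    observed_on="2026-01-27",
    response_received=True,
    addresses_substance=False,
    review_provided=False,
    notes="Generic support reply unrelated to the notice.",
)
print(to_json_line(record))
```

Appending one line per observation keeps a dated trail, including the periods in which nothing was received.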